Works while you sleep.

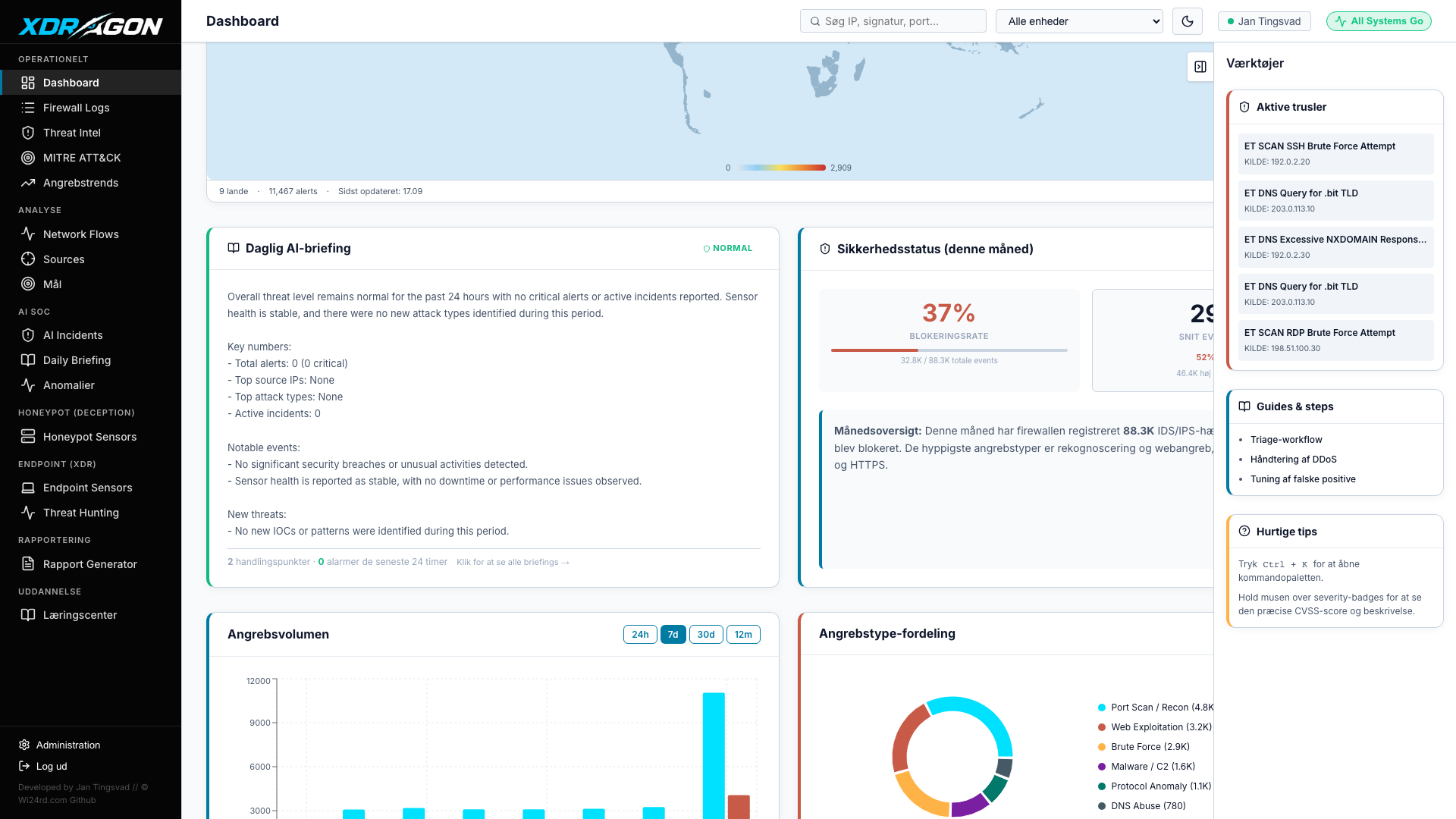

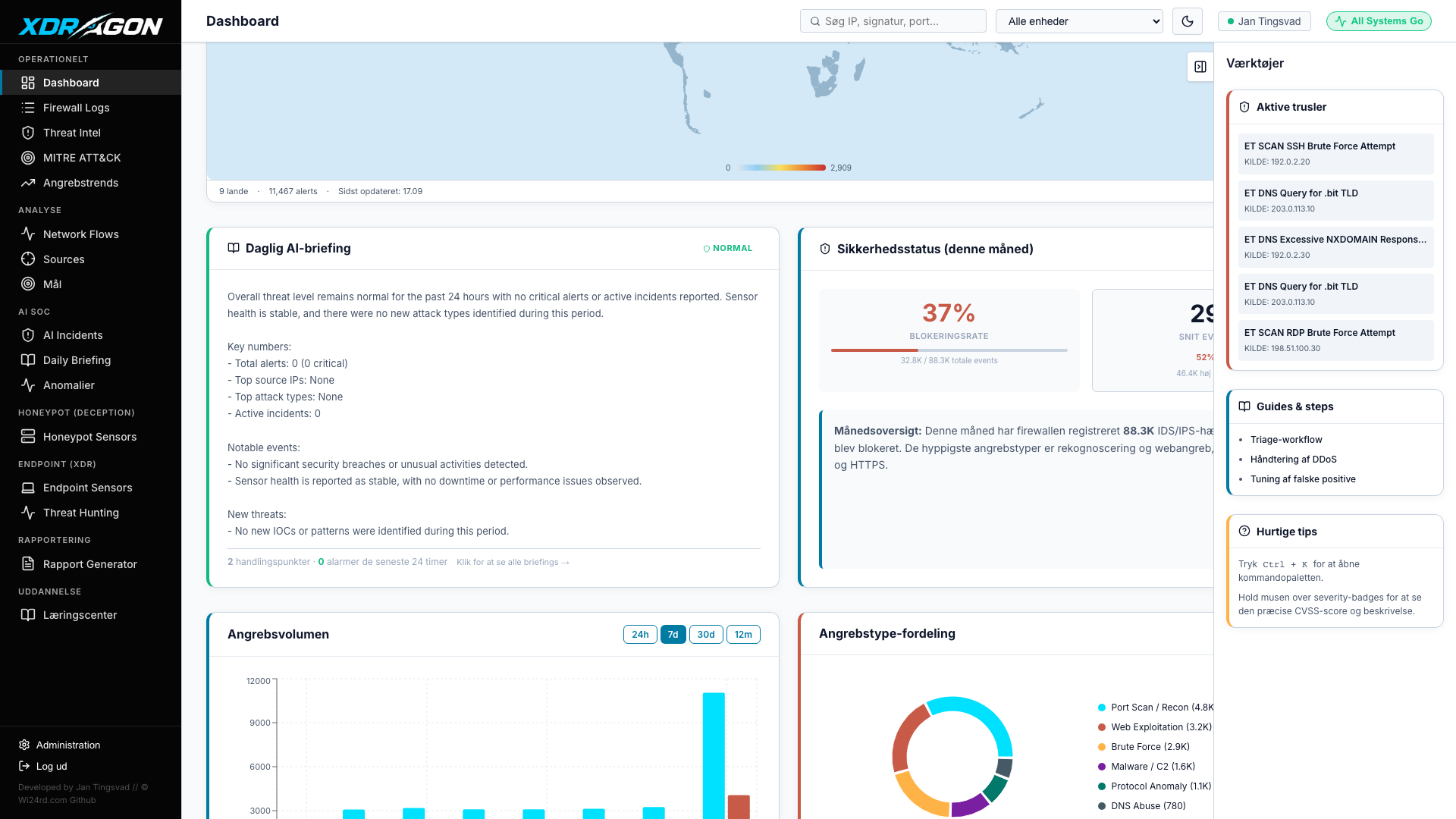

Five scheduled jobs run continuously in the background — no analyst input required. Your security posture is assessed, correlated, and reported around the clock.

T-SecOps runs a fully autonomous SOC layer on your own hardware. Local LLMs classify threats, correlate events, detect beaconing, and write your morning briefing, all without sending a single byte of your telemetry to any external service.

T-SecOps separates on-demand analysis from continuous autonomous operations. The on-demand layer responds to analyst queries in real time. The autonomous layer runs scheduled jobs independently — even when no one is watching the dashboard.

Every model is built from a purpose-built Ollama Modelfile configured with a security-focused system prompt. qwen2.5:7b serves as the primary reasoning backbone. Lighter models handle high-frequency tasks to preserve GPU headroom for complex analysis.
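To make the Modelfile idea concrete, here is a minimal sketch of how such a file could be generated. It is an illustration, not the Modelfiles T-SecOps ships: the `render_modelfile` helper, the prompt wording, and the temperature value are all assumptions. Only the `FROM`, `PARAMETER`, and `SYSTEM` instructions are standard Ollama Modelfile syntax.

```python
def render_modelfile(base_model: str, system_prompt: str, temperature: float = 0.2) -> str:
    """Return Ollama Modelfile text that pins a system prompt onto a base model.

    Hypothetical helper for illustration; not part of T-SecOps or Ollama.
    """
    if '"""' in system_prompt:
        # The prompt is wrapped in triple quotes, so it must not contain them.
        raise ValueError("system prompt must not contain triple quotes")
    return (
        f"FROM {base_model}\n"
        f"PARAMETER temperature {temperature}\n"
        f'SYSTEM """{system_prompt.strip()}"""\n'
    )


# Assumed prompt text; the real security prompts are not given in this document.
TRIAGE_PROMPT = (
    "You are a SOC triage assistant. Classify each alert as benign, "
    "suspicious, or malicious and justify the verdict in one sentence."
)

modelfile_text = render_modelfile("qwen2.5:7b", TRIAGE_PROMPT)
print(modelfile_text)
```

Saved as `Modelfile`, the output could then be registered with `ollama create soc-triage -f Modelfile`, where `soc-triage` is a placeholder name.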

Beyond raw Suricata alerts, the smart alerting layer applies context-aware rules to detect patterns that require cross-source reasoning. Each rule fires with an AI-written summary explaining why it triggered.
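As an illustration of cross-source reasoning, the sketch below pairs a network alert with endpoint activity seen on the same host within a short time window. It is one plausible shape for such a rule, not the product's actual rule engine. The `correlate` function, the endpoint event fields (`host_ip`, `process`), the five-minute window, and all sample records are assumptions. The alert fields `timestamp`, `src_ip`, and `alert.signature` follow Suricata's EVE JSON naming, with timestamps simplified to plain ISO 8601.

```python
from datetime import datetime, timedelta


def correlate(alerts, endpoint_events, window=timedelta(minutes=5)):
    """Pair each network alert with endpoint events on the same host within `window`."""
    matches = []
    for alert in alerts:
        alert_time = datetime.fromisoformat(alert["timestamp"])
        for event in endpoint_events:
            if event["host_ip"] != alert["src_ip"]:
                continue  # different host, not related
            event_time = datetime.fromisoformat(event["timestamp"])
            if abs(event_time - alert_time) <= window:
                matches.append((alert, event))
    return matches


# Fabricated sample records, for demonstration only.
alerts = [
    {
        "timestamp": "2024-05-01T10:00:00+00:00",
        "src_ip": "10.0.0.5",
        "alert": {"signature": "Example outbound C2 signature"},
    }
]
endpoint_events = [
    {"timestamp": "2024-05-01T10:02:30+00:00", "host_ip": "10.0.0.5", "process": "powershell.exe"},
    {"timestamp": "2024-05-01T11:30:00+00:00", "host_ip": "10.0.0.5", "process": "chrome.exe"},
    {"timestamp": "2024-05-01T10:01:00+00:00", "host_ip": "10.0.0.9", "process": "cmd.exe"},
]

matches = correlate(alerts, endpoint_events)
for alert, event in matches:
    print(alert["alert"]["signature"], "<->", event["process"])
```

In a pipeline like the one described, each matched pair would be the input handed to a local model to produce the written summary of why the rule fired.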

Unlike cloud-based AI SOC services, T-SecOps processes every piece of your telemetry locally. Your alert data, network logs, and endpoint events never leave your premises.

Deploy T-SecOps and have six local AI models running on your hardware within the hour.